Being a user researcher at Essex County Council isn’t just a new job for me; it’s a new discipline.

I’ve got a good knowledge of research practice from gathering and analysing information about the UK population for market research firms.

What’s new for me is observing users’ behaviour and building a picture of what they need, rather than what they expect or want, as traditional research does.

Starting with the basics

The first step of my journey was a hefty one, all the way up to the GDS Academy in Newcastle. There, others like me gathered to learn how they could improve their services through research and testing.

We started with the basics, learning the theory behind this user-centred approach. There were some top tips for analysing your data and presenting your findings, which I’ll definitely be using in the future.

Gathering data

For me the most helpful things were the practical exercises we did in our groups. These took in all the essential steps for good user research:

- putting together user needs

- identifying your users

- writing discussion guides

- interviewing

- taking notes

- analysing the data and pulling out insights and actions.

Usability testing

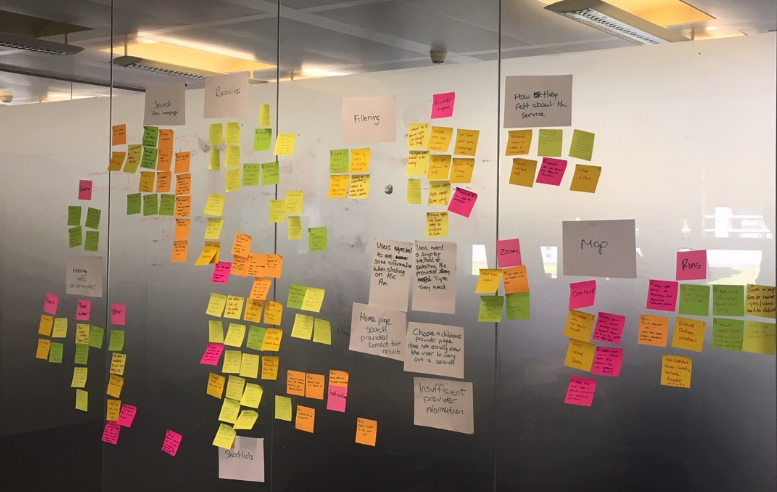

For this section of the course we tested Essex’s Find a childcare provider app and Blackburn’s report a broken street lamp app. These both seem like simple tasks but hide variables that can quickly throw an unsuspecting user off.

As user researchers, it often falls to us to fight for the user. This involves gathering data, lots of data. From just a 10-minute usability session, we managed to cover an entire glass wall with insights, outputs and actions.

Beating the bias

Data on its own isn’t enough. Like any other resource, the way we collate it and use it is important. We need to make sure we build an accurate picture of the users’ experience rather than picking the stats that fit our own frame of reference.

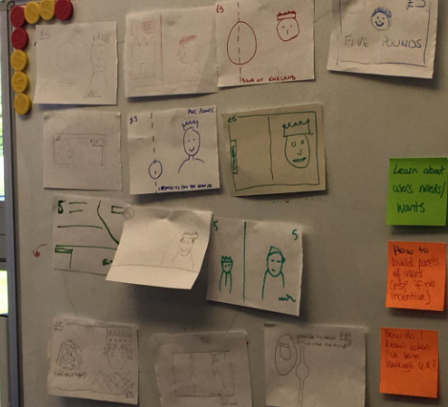

To illustrate this, each of us was asked to draw a £5 note from memory. Some drawings were elaborate and decorative, others simple and to the point. The lesson we took from this is that the users who give us the most data shouldn’t outweigh those who give less. When you’re interviewing people face-to-face, and building a rapport, it can be really tempting to delve into the richness of detail they give. Their input, however, isn’t more valuable than that of someone who may have given shorter, less detailed answers.

It’s our job to take a step back from these direct interactions with the users. We need to focus on the data: what the users actually did, not just what they said. Someone who tells you that they’re really good at doing things online might actually struggle with bits of the journey you’re testing.

This is a big ask. You have to be empathetic, keeping in mind barriers facing the user, but also not lose sight of the issues that you're trying to spot.

Next steps

It was a really great experience, and I’ve shared what I’ve learned with our user research community of practice back here in Essex.

We’re hoping to put what we’ve learned, and how it applies in Essex, into a show and tell soon, so keep an eye out for that.

2 comments

Comment by Clare Reynolds posted on

This sounds like it was such a positive day and experience that you can take forward and develop within ECC.

Comment by John Newton posted on

Thanks Clare! We've got a show and tell on the 24 July in E1, so feel free to come along to find out more.